The phenomenon of the public cloud is difficult to get your arms around. Since AWS kicked it off early in the century, it has grown and evolved into a modern computing platform - creating the

Another reason that growth is stagnating is that CFOs have stepped in. Early in the cycle, the CTO/CIO won the argument on productivity gains. Indeed, going to the cloud to learn the skills of the cloud is something

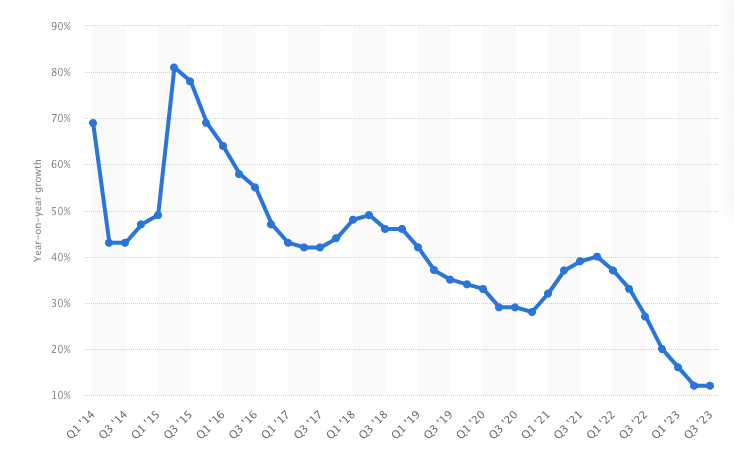

This depressed growth - even in the face of a gen AI boom that ostensibly drove usage up (because the hyperscalers had all of the GPU inventory). Here is AWS’s growth rate:

(Edit: after this post was published, Amazon reported earnings that returned AWS growth to 17%.)

What doesn’t get a lot of press, but should, is the emerging nature of the CFO’s decision making and the partnership between the CTO/CIO and the CFO. Because the cloud operating model runs anywhere and is a proven concept, the CTO/CIO and CFO are free to look at colos and on-prem alternatives to the cloud.

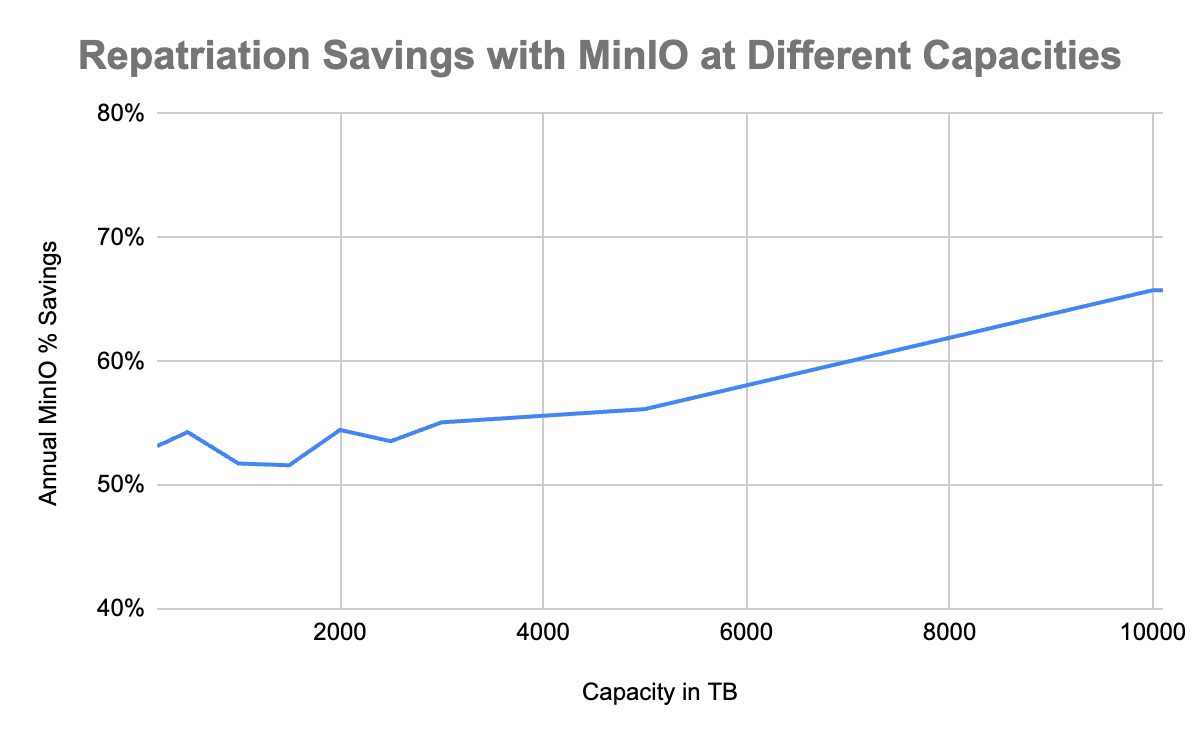

What they have realized is that the more you have on the cloud - the bigger the impact on the organization when you repatriate that workload to a colo or your own private cloud. Here is its visually:

These are massive savings and they get bigger the more you repatriate.

We have one customer, a leader in the cloud workload and endpoint security, threat intelligence, and cyberattack response space. They repatriated 500 PB of a threat intelligence workload to an Equinix colo. The savings generated by that effort improved the gross margin of the ENTIRE business by between 2% and 3%. For a company with tens of billions of dollars in market cap, this is a massive improvement in the valuation of the business. Needless to say, they doubled the repatriation goals and are now north of an exabyte.

This is just one example. One of the biggest streaming companies took a similar approach, moving their workload back from AWS. They reduced their costs by more than 50%.

Let’s explore what the core drivers of the savings are:

- Reduction in data transfer/egress costs. This ranges from nearly 70% of overall costs on smaller workloads to 20% for 100 PB. Even though the percentage drops at the 100 PB level, the number is still material at nearly $3M per year. You can’t negotiate that number much. With MinIO - that number is zero.

- The cost of S3 isn’t small either. Yes, you can tier to lower-cost options, and AWS continues to make that more attractive, but if you have to pull data out, the penalties are significant. At the 100 PB level, one would expect to be paying around $33M a year. With MinIO that number is going to be $4.3M. You can check our math here. Sure, you have to buy your own hardware (we think you can get top-of-the-line NVMe kit for $5M), but it is a drop in the bucket (we are at less than $9.4M for hardware and software). Add in some colo charges of $187K and your total is around $9.5M.

So would you rather spend $33M a year, or $9.5M in year one and $5.1M in each of the remaining four ($4.3M plus $860K a year for 20% hardware replacement)? Your five-year cost is $166M with AWS. Your MinIO + COTS + colo cost is $30M. Those are real savings. More importantly, they are proven savings as evidenced by the teams at
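The five-year comparison can be reproduced with a short calculation. The sketch below only restates the figures quoted in this post (in millions of dollars); the constant and function names are illustrative choices, not part of any MinIO tooling. It assumes, as the text does, that the colo charge is counted once in year one and that AWS spend stays flat. With the rounded $33M input the AWS total comes out at $165M, slightly under the $166M quoted, which presumably reflects an unrounded annual figure.

```python
# Figures quoted in the post for a 100 PB deployment, in millions of USD.
AWS_S3_PER_YEAR = 33.0             # estimated annual S3 spend
MINIO_SOFTWARE_PER_YEAR = 4.3      # annual MinIO cost
HARDWARE_UPFRONT = 5.0             # NVMe hardware bought in year one
COLO_YEAR_ONE = 0.187              # colo charges, counted once as in the post
HARDWARE_REFRESH_PER_YEAR = 0.86   # yearly hardware replacement in years 2-5


def five_year_costs(years=5):
    """Return (aws_total, private_total) in millions of USD."""
    aws_total = AWS_S3_PER_YEAR * years

    # Year one carries the hardware purchase, software and colo charge.
    year_one = HARDWARE_UPFRONT + MINIO_SOFTWARE_PER_YEAR + COLO_YEAR_ONE
    # Each later year carries software plus the hardware refresh.
    later_year = MINIO_SOFTWARE_PER_YEAR + HARDWARE_REFRESH_PER_YEAR

    private_total = year_one + later_year * (years - 1)
    return aws_total, private_total


if __name__ == "__main__":
    aws, private = five_year_costs()
    print(f"AWS over five years:          ${aws:.1f}M")
    print(f"MinIO + COTS + colo:          ${private:.1f}M")
    print(f"Difference over five years:   ${aws - private:.1f}M")
```

Changing `years` or any of the constants is a quick way to test how sensitive the gap is to your own hardware quotes or negotiated cloud rates.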

Keep in mind that 100 PB isn’t that much data in the age of AI. It is more like the unit you should be thinking about. Ten units is what some of our most sophisticated customers buy; we have others with twenty units. They are posting on LinkedIn about

- TCO is one. MinIO’s legendary simplicity means that enterprises can manage these exascale deployments with just a handful of resources. Our Enterprise Object Store features were built expressly for them: things like Observability for tens of thousands of drives, Key Management for billions of objects, or Cache for hundreds of servers. These are all designed to simplify what can become very complicated.

- Performance is another - and specifically performance at scale. It is easy to be fast at 200 TB. It is hard to be fast at 2 EB. That is, unless you are architected to do so. Furthermore, enterprises want to be multi-modal on the application side at that scale. That means AI, advanced analytics, application workloads and, yes, the tried-and-true archival workloads.

- Control is a third. Enterprises that want full control over their stack run it in a colo or on their private cloud. Don’t want your cloud provider looking in your buckets? Don’t run on the public cloud. Don’t want your cloud provider’s security complexity, but still want that level of comfort? Run privately. MinIO and Equinix, for example, are the cloud you control. Data sovereignty is another consideration here.

The overall point is that enterprises do not make decisions solely on cost. There are other considerations, and if those considerations are not met, they will not make the move. A high-performance, cloud-native object store offers you economic benefits, performance benefits, and control benefits - and they compound with scale.

If you want to learn more and take advantage of our value engineering function to run your own models - reach out to us at